Face Generation

This tutorial was part of the projects for the Deep Learning Nanodegree at Udacity. In this project, we’ll define and train a Deep Convolutional Generative Adversarial Network (DCGAN) on a dataset of faces. The goal is to get a generator network to generate new images of faces that look as realistic as possible. The project is broken down into a series of tasks, from loading the data to defining and training the adversarial networks.

The model is trained on the CelebFaces Attributes Dataset (CelebA)

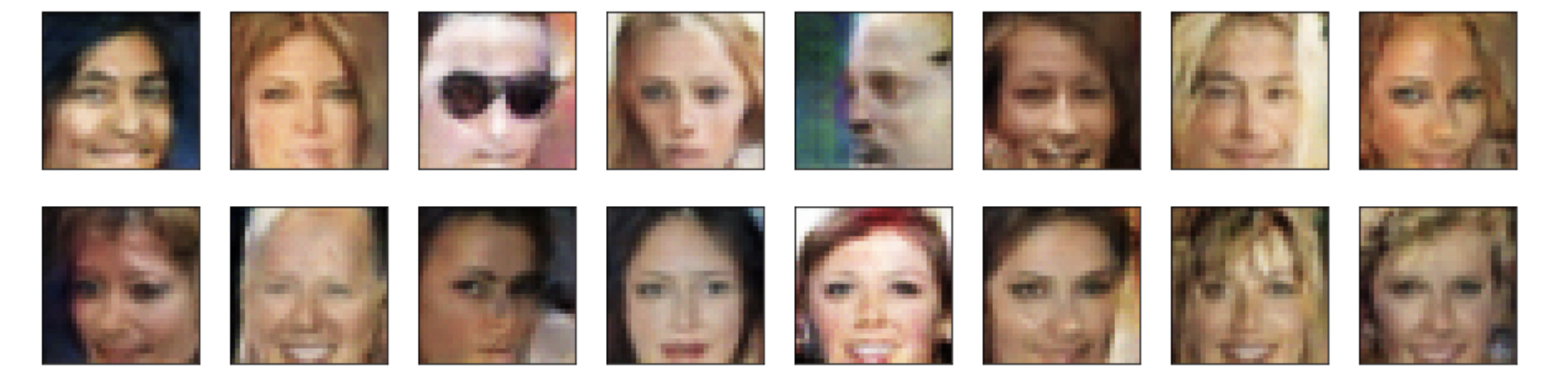

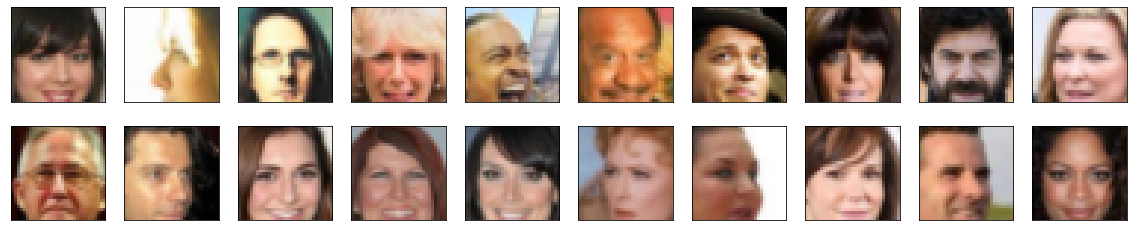

The output is new human faces such as:

You can also clone the repo on Github

Tutorial time

We will define and train a DCGAN on a dataset of faces. Your goal is to get a generator network to generate new images of faces that look as realistic as possible!

The project will be broken down into a series of tasks from loading in data to defining and training adversarial networks. At the end of the notebook, you’ll be able to visualize the results of your trained Generator to see how it performs; your generated samples should look like fairly realistic faces with small amounts of noise.

Get the Data

You’ll be using the CelebFaces Attributes Dataset (CelebA) to train your adversarial networks.

This dataset is more complex than the number datasets (like MNIST or SVHN) you’ve been working with, and so, you should prepare to define deeper networks and train them for a longer time to get good results. It is suggested that you utilize a GPU for training.

Pre-processed Data

Since the project’s main focus is on building the GANs, we’ve done some of the pre-processing for you. Each of the CelebA images has been cropped to remove parts of the image that don’t include a face, then resized down to 64x64x3 NumPy images. Some sample data is shown below.

If you are working locally, you can download this data by clicking here

This is a zip file that you’ll need to extract in the home directory of this notebook for further loading and processing. After extracting the data, you should be left with a directory of data processed_celeba_small/
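If you would rather extract the archive from Python than from the shell, a minimal sketch using the standard library's zipfile module could look like the following. The helper name extract_dataset is hypothetical; only the archive name comes from the text above.

```python
import zipfile
from pathlib import Path


def extract_dataset(zip_path, target_dir="."):
    """Extract zip_path into target_dir and return the top-level entry names."""
    zip_path = Path(zip_path)
    if not zip_path.is_file():
        raise FileNotFoundError(f"{zip_path} not found; download it first")
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(target_dir)
        # Collect the distinct top-level entries of the archive
        return sorted({name.split("/")[0] for name in archive.namelist()})


# Usage (assumes the archive sits next to the notebook):
# extract_dataset("processed_celeba_small.zip")
```

Raising FileNotFoundError early gives a clearer message than a failed unzip when the download step was skipped.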

# ignore warnings

import warnings

warnings.filterwarnings("ignore")

# can comment out after executing

!unzip processed_celeba_small.zip

unzip: cannot find or open processed_celeba_small.zip, processed_celeba_small.zip.zip or processed_celeba_small.zip.ZIP.

data_dir = 'processed_celeba_small/'

import pickle as pkl

import matplotlib.pyplot as plt

import numpy as np

import problem_unittests as tests

#import helper

%matplotlib inline

Visualize the CelebA Data

The CelebA dataset contains over 200,000 celebrity images with annotations. Since you’re going to be generating faces, you won’t need the annotations, you’ll only need the images. Note that these are color images with 3 color channels (RGB) each.

Pre-process and Load the Data

Since the project’s main focus is on building the GANs, we’ve done some of the pre-processing for you. Each of the CelebA images has been cropped to remove parts of the image that don’t include a face, then resized down to 64x64x3 NumPy images. This pre-processed dataset is a smaller subset of the very large CelebA data.

There are a few other steps that you’ll need to transform this data and create a DataLoader.

ImageFolder

To create a dataset given a directory of images, it’s recommended that you use PyTorch’s ImageFolder wrapper, with a root directory processed_celeba_small/ and data transformation passed in.

# necessary imports

import torch

from torchvision import datasets

from torchvision import transforms

def get_dataloader(batch_size, image_size, data_dir='processed_celeba_small/'):
    """
    Batch the neural network data using DataLoader
    :param batch_size: The size of each batch; the number of images in a batch
    :param image_size: The square size of the image data (x, y)
    :param data_dir: Directory where image data is located
    :return: DataLoader with batched data
    """
    # resize each image, then convert it to a tensor with values in [0, 1]
    transform = transforms.Compose([transforms.Resize(image_size),
                                    transforms.ToTensor()])
    image_dataset = datasets.ImageFolder(data_dir, transform)
    return torch.utils.data.DataLoader(image_dataset, batch_size=batch_size, shuffle=True)

Create a DataLoader

Create a DataLoader celeba_train_loader with appropriate hyperparameters.

Call the above function and create a dataloader to view images.

- You can decide on any reasonable batch_size parameter

- Your image_size must be 32. Resizing the data to a smaller size will make for faster training, while still creating convincing images of faces!

# Define function hyperparameters

batch_size = 128

img_size = 32

"""

DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE

"""

# Call your function and get a dataloader

celeba_train_loader = get_dataloader(batch_size, img_size)

Next, you can view some images! You should see square images of somewhat-centered faces.

Note: You’ll need to convert the Tensor images into a NumPy type and transpose the dimensions to correctly display an image. Suggested imshow code is below, but it may not be perfect.

# helper display function
def imshow(img):
    npimg = img.numpy()
    # reorder (channels, height, width) to (height, width, channels) for matplotlib
    plt.imshow(np.transpose(npimg, (1, 2, 0)))

# obtain one batch of training images
dataiter = iter(celeba_train_loader)
images, _ = next(dataiter) # _ for no labels

# plot the first images in the batch (the labels are not used)
fig = plt.figure(figsize=(20, 4))
plot_size = 20
for idx in np.arange(plot_size):
    # integer division: add_subplot expects integer grid dimensions
    ax = fig.add_subplot(2, plot_size//2, idx+1, xticks=[], yticks=[])
    imshow(images[idx])

Pre-process your image data and scale it to a pixel range of -1 to 1

You need to do a bit of pre-processing; you know that the output of a tanh activated generator will contain pixel values in a range from -1 to 1, and so, we need to rescale our training images to a range of -1 to 1. (Right now, they are in a range from 0-1.)

# TODO: Complete the scale function

def scale(x, feature_range=(-1, 1)):
    ''' Scale takes in an image x and returns that image, scaled
    with a feature_range of pixel values from -1 to 1.
    This function assumes that the input x is already scaled from 0-1.'''
    # assume x is scaled to (0, 1)
    # scale to feature_range and return scaled x
    low, high = feature_range # avoid shadowing the built-in min and max
    return x * (high - low) + low

# check scaled range

# should be close to -1 to 1

img = images[0]

scaled_img = scale(img)

print('Min: ', scaled_img.min())

print('Max: ', scaled_img.max())

Min: tensor(-0.9137)

Max: tensor(0.8745)
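To make the arithmetic concrete, here is a small plain-Python sketch of the same linear mapping applied to single pixel values, together with its inverse. The names scale_value and unscale_value are hypothetical and used only for this illustration.

```python
def scale_value(x, feature_range=(-1.0, 1.0)):
    """Map a value in [0, 1] to feature_range."""
    low, high = feature_range
    return x * (high - low) + low


def unscale_value(y, feature_range=(-1.0, 1.0)):
    """Inverse of scale_value: map a value in feature_range back to [0, 1]."""
    low, high = feature_range
    return (y - low) / (high - low)


for pixel in (0.0, 0.5, 1.0):
    print(pixel, "->", scale_value(pixel))
# 0.0 -> -1.0
# 0.5 -> 0.0
# 1.0 -> 1.0
```

The printed minimum and maximum above fall short of -1 and 1 simply because that particular image contains no pure black or pure white pixels.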

Define the Model

A GAN is composed of two adversarial networks, a discriminator and a generator.

Discriminator

Your first task will be to define the discriminator. This is a convolutional classifier like you’ve built before, only without any maxpooling layers. To deal with this complex data, it’s suggested you use a deep network with normalization. You are also allowed to create any helper functions that may be useful.

Complete the Discriminator class

- The inputs to the discriminator are 32x32x3 tensor images

- The output should be a single value that will indicate whether a given image is real or fake

import torch.nn as nn

import torch.nn.functional as F

def conv(in_channels, out_channels, kernel_size, stride=2, padding=1, batch_norm=True):
    layers = []
    conv_layer = nn.Conv2d(in_channels=in_channels, out_channels=out_channels,
                           kernel_size=kernel_size, stride=stride, padding=padding, bias=False)
    layers.append(conv_layer)
    if batch_norm:
        layers.append(nn.BatchNorm2d(out_channels))
    return nn.Sequential(*layers)

class Discriminator(nn.Module):

    def __init__(self, conv_dim):
        """
        Initialize the Discriminator Module
        :param conv_dim: The depth of the first convolutional layer
        """
        super(Discriminator, self).__init__()
        self.conv_dim = conv_dim
        self.conv1 = conv(3, conv_dim, 4, batch_norm=False) # (16, 16, conv_dim)
        self.conv2 = conv(conv_dim, conv_dim*2, 4) # (8, 8, conv_dim*2)
        self.conv3 = conv(conv_dim*2, conv_dim*4, 4) # (4, 4, conv_dim*4)
        self.conv4 = conv(conv_dim*4, conv_dim*8, 4) # (2, 2, conv_dim*8)
        self.classifier = nn.Linear(conv_dim*8*2*2, 1)

    def forward(self, x):
        """
        Forward propagation of the neural network
        :param x: The input to the neural network
        :return: Discriminator logits; the output of the neural network
        """
        # define feedforward behavior
        # Leaky ReLU can have an advantage over ReLU
        # https://www.reddit.com/r/MachineLearning/comments/4znzvo/what_are_the_advantages_of_relu_over_the/
        out = F.leaky_relu(self.conv1(x), 0.2)
        out = F.leaky_relu(self.conv2(out), 0.2)
        out = F.leaky_relu(self.conv3(out), 0.2)
        out = F.leaky_relu(self.conv4(out), 0.2)
        # flatten to (batch_size, conv_dim*8*2*2) for the linear classifier
        out = out.view(-1, self.conv_dim*8*2*2)
        out = self.classifier(out)
        return out

"""

DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE

"""

tests.test_discriminator(Discriminator)

Tests Passed
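The shape comments in the discriminator can be checked by hand with the standard output-size formula for a convolution without dilation. The sketch below is plain Python; the helper name conv_out_size is hypothetical, and the defaults mirror the kernel size 4, stride 2 and padding 1 used above.

```python
def conv_out_size(size, kernel_size=4, stride=2, padding=1):
    """Spatial output size of a Conv2d layer (dilation 1)."""
    return (size + 2 * padding - kernel_size) // stride + 1


size = 32
sizes = [size]
for _ in range(4):  # conv1 .. conv4
    size = conv_out_size(size)
    sizes.append(size)

print(sizes)  # [32, 16, 8, 4, 2]
```

Each layer halves the spatial size, so the final feature map is 2x2 with conv_dim*8 channels, which is where the conv_dim*8*2*2 input size of the linear classifier comes from.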

Generator

The generator should upsample an input and generate a new image of the same size as our training data 32x32x3. This should be mostly transpose convolutional layers with normalization applied to the outputs.

def deconv(in_channels, out_channels, kernel_size, stride=2, padding=1, batch_norm=True):
    layers = []
    layers.append(nn.ConvTranspose2d(in_channels, out_channels, kernel_size, stride, padding, bias=False))
    if batch_norm:
        layers.append(nn.BatchNorm2d(out_channels))
    return nn.Sequential(*layers)

class Generator(nn.Module):

    def __init__(self, z_size, conv_dim):
        """
        Initialize the Generator Module
        :param z_size: The length of the input latent vector, z
        :param conv_dim: The depth of the inputs to the *last* transpose convolutional layer
        """
        super(Generator, self).__init__()
        self.conv_dim = conv_dim
        self.fc = nn.Linear(z_size, conv_dim*8*2*2)
        self.t_conv1 = deconv(conv_dim*8, conv_dim*4, 4)
        self.t_conv2 = deconv(conv_dim*4, conv_dim*2, 4)
        self.t_conv3 = deconv(conv_dim*2, conv_dim, 4)
        self.t_conv4 = deconv(conv_dim, 3, 4, batch_norm=False)

    def forward(self, x):
        """
        Forward propagation of the neural network
        :param x: The input to the neural network
        :return: A 32x32x3 Tensor image as output
        """
        # define feedforward behavior
        out = self.fc(x)
        out = out.view(-1, self.conv_dim*8, 2, 2) # (batch_size, conv_dim*8, 2, 2)
        out = F.relu(self.t_conv1(out))
        out = F.relu(self.t_conv2(out))
        out = F.relu(self.t_conv3(out))
        # last layer: tanh activation instead of relu
        out = self.t_conv4(out)
        out = torch.tanh(out) # F.tanh is deprecated in favor of torch.tanh
        return out

"""

DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE

"""

tests.test_generator(Generator)

Tests Passed
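The generator mirrors the discriminator: each transpose convolution doubles the spatial size. A plain-Python sketch of the output-size formula for a transpose convolution (no dilation, no output padding) shows how a 2x2 feature map grows to 32x32. The helper name deconv_out_size is hypothetical.

```python
def deconv_out_size(size, kernel_size=4, stride=2, padding=1):
    """Spatial output size of a ConvTranspose2d layer (dilation 1, output_padding 0)."""
    return (size - 1) * stride - 2 * padding + kernel_size


size = 2
sizes = [size]
for _ in range(4):  # t_conv1 .. t_conv4
    size = deconv_out_size(size)
    sizes.append(size)

print(sizes)  # [2, 4, 8, 16, 32]
```

This is why the fully connected layer produces conv_dim*8*2*2 values: they are reshaped into a 2x2 map before the four upsampling layers.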

Initialize the weights of your networks

To help your models converge, you should initialize the weights of the convolutional and linear layers in your model. The original DCGAN paper says:

All weights were initialized from a zero-centered Normal distribution with standard deviation 0.02.

So, your next task will be to define a weight initialization function that does just this!

You can refer back to the lesson on weight initialization or even consult existing model code, such as that from the networks.py file in CycleGAN Github repository to help you complete this function.

Complete the weight initialization function

- This should initialize only convolutional and linear layers

- Initialize the weights to a normal distribution, centered around 0, with a standard deviation of 0.02.

- The bias terms, if they exist, may be left alone or set to 0.

from torch.nn import init

def weights_init_normal(m):
    """
    Applies initial weights to certain layers in a model: convolutional and linear
    The weights are taken from a normal distribution
    with mean = 0, std dev = 0.02.
    :param m: A module or layer in a network
    """
    # classname will be something like:
    # `Conv2d`, `BatchNorm2d`, `Linear`, etc.
    classname = m.__class__.__name__
    isConvolution = classname.find('Conv') != -1
    isLinear = classname.find('Linear') != -1
    # parentheses are needed here: `and` binds tighter than `or`
    if hasattr(m, 'weight') and (isConvolution or isLinear):
        init.normal_(m.weight.data, 0.0, 0.02)
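The name-based layer selection can be tried out without PyTorch. The sketch below uses stand-in classes (hypothetical, defined only for this illustration) whose names match common PyTorch layers, and shows which ones a check of this kind is meant to pick. The parentheses make the intent explicit, since Python's `and` binds tighter than `or`.

```python
# Stand-in classes (hypothetical): only the class names and the presence of
# a `weight` attribute matter for this illustration.
class Conv2d:
    weight = "w"


class ConvTranspose2d:
    weight = "w"


class Linear:
    weight = "w"


class BatchNorm2d:
    weight = "w"


class Sequential:
    pass


def should_init(module):
    """Return True for layers that have weights and are convolutional or linear."""
    classname = module.__class__.__name__
    is_convolution = "Conv" in classname
    is_linear = "Linear" in classname
    return hasattr(module, "weight") and (is_convolution or is_linear)


for layer in (Conv2d(), ConvTranspose2d(), Linear(), BatchNorm2d(), Sequential()):
    print(layer.__class__.__name__, should_init(layer))
```

Only the first three print True. In real code, a stricter alternative to matching on names would be an isinstance check against nn.Conv2d, nn.ConvTranspose2d and nn.Linear.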

Build complete network

Define your models’ hyperparameters and instantiate the discriminator and generator from the classes defined above. Make sure you’ve passed in the correct input arguments.

def build_network(d_conv_dim, g_conv_dim, z_size):
    # define discriminator and generator
    D = Discriminator(d_conv_dim)
    G = Generator(z_size=z_size, conv_dim=g_conv_dim)

    # initialize model weights
    D.apply(weights_init_normal)
    G.apply(weights_init_normal)

    print(D)
    print()
    print(G)

    return D, G

Define model hyperparameters

# Define model hyperparams

d_conv_dim = 64

g_conv_dim = 64

z_size = 100

"""

DON'T MODIFY ANYTHING IN THIS CELL THAT IS BELOW THIS LINE

"""

D, G = build_network(d_conv_dim, g_conv_dim, z_size)

Discriminator(

(conv1): Sequential(

(0): Conv2d(3, 64, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

)

(conv2): Sequential(

(0): Conv2d(64, 128, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

(1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(conv3): Sequential(

(0): Conv2d(128, 256, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

(1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(conv4): Sequential(

(0): Conv2d(256, 512, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

(1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(classifier): Linear(in_features=2048, out_features=1, bias=True)

)

Generator(

(fc): Linear(in_features=100, out_features=2048, bias=True)

(t_conv1): Sequential(

(0): ConvTranspose2d(512, 256, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

(1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(t_conv2): Sequential(

(0): ConvTranspose2d(256, 128, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

(1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(t_conv3): Sequential(

(0): ConvTranspose2d(128, 64, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

(1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(t_conv4): Sequential(

(0): ConvTranspose2d(64, 3, kernel_size=(4, 4), stride=(2, 2), padding=(1, 1), bias=False)

)

)

Training on GPU

Check if you can train on GPU. Here, we’ll set this as a boolean variable train_on_gpu. Later, you’ll be responsible for making sure that

- Models,

- Model inputs, and

- Loss function arguments

are moved to GPU, where appropriate.

import torch

# Check for a GPU

train_on_gpu = torch.cuda.is_available()

if not train_on_gpu:

print('No GPU found. Please use a GPU to train your neural network.')

else:

print('Training on GPU!')

No GPU found. Please use a GPU to train your neural network.

Discriminator and Generator Losses

Now we need to calculate the losses for both types of adversarial networks.

Discriminator Losses

- For the discriminator, the total loss is the sum of the losses for real and fake images,

d_loss = d_real_loss + d_fake_loss.

- Remember that we want the discriminator to output 1 for real images and 0 for fake images, so we need to set up the losses to reflect that.

Generator Loss

The generator loss will look similar only with flipped labels. The generator’s goal is to get the discriminator to think its generated images are real.

Exercise: Complete real and fake loss functions

You may choose to use either cross entropy or a least squares error loss to complete the following real_loss and fake_loss functions.

def real_loss(D_out):
    '''Calculates how close discriminator outputs are to being real.
    param, D_out: discriminator logits
    return: real loss'''
    batch_size = D_out.size(0)
    labels = torch.ones(batch_size) # real labels = 1
    if train_on_gpu:
        labels = labels.cuda()
    # sigmoid + binary cross entropy in one numerically stable step
    criterion = nn.BCEWithLogitsLoss()
    loss = criterion(D_out.squeeze(), labels)
    return loss

def fake_loss(D_out):
    '''Calculates how close discriminator outputs are to being fake.
    param, D_out: discriminator logits
    return: fake loss'''
    batch_size = D_out.size(0)
    labels = torch.zeros(batch_size) # fake labels = 0
    if train_on_gpu:
        labels = labels.cuda()
    criterion = nn.BCEWithLogitsLoss()
    loss = criterion(D_out.squeeze(), labels)
    return loss
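To see what these losses compute for a single discriminator output, here is a plain-Python sketch of binary cross entropy on a raw logit, next to the least squares alternative mentioned above. The function names bce_with_logits and least_squares are hypothetical; this is not how PyTorch implements the loss internally, which uses a numerically more stable formulation.

```python
import math


def bce_with_logits(logit, label):
    """Binary cross entropy on a raw logit, for a single example."""
    prob = 1.0 / (1.0 + math.exp(-logit))  # sigmoid
    return -(label * math.log(prob) + (1.0 - label) * math.log(1.0 - prob))


def least_squares(logit, label):
    """Least squares alternative, applied to the raw discriminator output."""
    return (logit - label) ** 2


for logit in (-2.0, 0.0, 2.0):
    real = bce_with_logits(logit, 1.0)  # label 1: "this image is real"
    fake = bce_with_logits(logit, 0.0)  # label 0: "this image is fake"
    print(f"logit={logit:+.1f}  real_loss={real:.4f}  fake_loss={fake:.4f}")
```

At a logit of 0 the discriminator is undecided and both losses equal ln 2 (about 0.6931). A confident positive logit makes the real loss small and the fake loss large, which is exactly what the generator exploits when it is trained with real_loss on its own fake images.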

Optimizers

Define optimizers for your Discriminator (D) and Generator (G)

Define optimizers for your models with appropriate hyperparameters.

import torch.optim as optim

lr = 0.0002

beta1=0.5

beta2=0.999 # default value

# Create optimizers for the discriminator D and generator G

d_optimizer = optim.Adam(D.parameters(), lr, [beta1, beta2])

g_optimizer = optim.Adam(G.parameters(), lr, [beta1, beta2])

Training

Training will involve alternating between training the discriminator and the generator. You’ll use your functions real_loss and fake_loss to help you calculate the discriminator losses.

- You should train the discriminator by alternating on real and fake images

- Then the generator, which tries to trick the discriminator and should have an opposing loss function

Saving Samples

You’ve been given some code to print out some loss statistics and save some generated “fake” samples.

Complete the training function

Keep in mind that, if you’ve moved your models to GPU, you’ll also have to move any model inputs to GPU.

def train(D, G, n_epochs, print_every=50):
    '''Trains adversarial networks for some number of epochs
    param, D: the discriminator network
    param, G: the generator network
    param, n_epochs: number of epochs to train for
    param, print_every: when to print and record the models' losses
    return: D and G losses'''

    # move models to GPU
    if train_on_gpu:
        D.cuda()
        G.cuda()

    # keep track of loss and generated, "fake" samples
    samples = []
    losses = []

    # Get some fixed data for sampling. These are images that are held
    # constant throughout training, and allow us to inspect the model's performance
    sample_size=16
    fixed_z = np.random.uniform(-1, 1, size=(sample_size, z_size))
    fixed_z = torch.from_numpy(fixed_z).float()
    # move z to GPU if available
    if train_on_gpu:
        fixed_z = fixed_z.cuda()

    # epoch training loop
    for epoch in range(n_epochs):

        # batch training loop
        for batch_i, (real_images, _) in enumerate(celeba_train_loader):

            batch_size = real_images.size(0)
            real_images = scale(real_images)

            # ===============================================
            # YOUR CODE HERE: TRAIN THE NETWORKS
            # ===============================================

            # 1. Train the discriminator on real and fake images
            d_optimizer.zero_grad()

            if train_on_gpu:
                real_images = real_images.cuda()

            D_real = D(real_images)
            d_real_loss = real_loss(D_real)

            z = np.random.uniform(-1, 1, size = (batch_size, z_size))
            z = torch.from_numpy(z).float()
            if train_on_gpu:
                z = z.cuda()
            fake_images = G(z)

            D_fake = D(fake_images)
            d_fake_loss = fake_loss(D_fake)

            d_loss = d_real_loss + d_fake_loss
            d_loss.backward()
            d_optimizer.step()

            # 2. Train the generator with an adversarial loss
            g_optimizer.zero_grad()

            z = np.random.uniform(-1, 1, size = (batch_size, z_size))
            z = torch.from_numpy(z).float()
            if train_on_gpu:
                z = z.cuda()
            fake_images = G(z)

            # flipped labels: the generator wants D to call its fakes real
            D_fake = D(fake_images)
            g_loss = real_loss(D_fake)

            g_loss.backward()
            g_optimizer.step()

            # ===============================================
            # END OF YOUR CODE
            # ===============================================

            # Print some loss stats
            if batch_i % print_every == 0:
                # append discriminator loss and generator loss
                losses.append((d_loss.item(), g_loss.item()))
                # print discriminator and generator loss
                print('Epoch [{:5d}/{:5d}] | d_loss: {:6.4f} | g_loss: {:6.4f}'.format(
                        epoch+1, n_epochs, d_loss.item(), g_loss.item()))

        ## AFTER EACH EPOCH##
        # this code assumes your generator is named G, feel free to change the name
        # generate and save sample, fake images
        G.eval() # for generating samples
        samples_z = G(fixed_z)
        samples.append(samples_z)
        G.train() # back to training mode

    # Save training generator samples
    with open('train_samples.pkl', 'wb') as f:
        pkl.dump(samples, f)

    # finally return losses
    return losses

Set your number of training epochs and train your GAN!

# set number of epochs

n_epochs = 2

"""

DON'T MODIFY ANYTHING IN THIS CELL

"""

# call training function

losses = train(D, G, n_epochs=n_epochs)

Epoch [ 1/ 2] | d_loss: 1.5885 | g_loss: 2.4595

Epoch [ 1/ 2] | d_loss: 0.1484 | g_loss: 5.3448

Epoch [ 1/ 2] | d_loss: 0.3365 | g_loss: 4.0576

Epoch [ 1/ 2] | d_loss: 0.2162 | g_loss: 4.7692

Epoch [ 1/ 2] | d_loss: 0.3506 | g_loss: 3.3385

Epoch [ 1/ 2] | d_loss: 0.7388 | g_loss: 5.3848

Epoch [ 1/ 2] | d_loss: 0.6447 | g_loss: 4.5798

Epoch [ 1/ 2] | d_loss: 1.0489 | g_loss: 1.5225

Epoch [ 1/ 2] | d_loss: 0.7226 | g_loss: 3.1539

Epoch [ 1/ 2] | d_loss: 0.8058 | g_loss: 1.9219

Epoch [ 1/ 2] | d_loss: 0.5621 | g_loss: 4.2040

Epoch [ 1/ 2] | d_loss: 0.7327 | g_loss: 3.2319

Epoch [ 1/ 2] | d_loss: 0.4906 | g_loss: 3.5896

Epoch [ 1/ 2] | d_loss: 0.7595 | g_loss: 2.5536

Epoch [ 1/ 2] | d_loss: 0.7745 | g_loss: 4.1521

Epoch [ 2/ 2] | d_loss: 0.9642 | g_loss: 4.7045

Epoch [ 2/ 2] | d_loss: 0.6971 | g_loss: 3.6240

Epoch [ 2/ 2] | d_loss: 0.6387 | g_loss: 4.3250

Epoch [ 2/ 2] | d_loss: 0.7282 | g_loss: 3.6856

Epoch [ 2/ 2] | d_loss: 0.7808 | g_loss: 2.7164

Epoch [ 2/ 2] | d_loss: 0.9620 | g_loss: 4.1325

Epoch [ 2/ 2] | d_loss: 0.7033 | g_loss: 4.0450

Epoch [ 2/ 2] | d_loss: 0.5007 | g_loss: 2.7120

Epoch [ 2/ 2] | d_loss: 0.8774 | g_loss: 2.0234

Epoch [ 2/ 2] | d_loss: 0.6263 | g_loss: 3.2441

Epoch [ 2/ 2] | d_loss: 0.9937 | g_loss: 1.4169

Epoch [ 2/ 2] | d_loss: 0.5905 | g_loss: 3.7324

Epoch [ 2/ 2] | d_loss: 0.5508 | g_loss: 2.3028

Epoch [ 2/ 2] | d_loss: 0.7045 | g_loss: 2.2604

Epoch [ 2/ 2] | d_loss: 0.5300 | g_loss: 3.0362

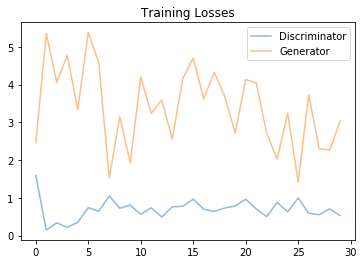

Training loss

Plot the training losses for the generator and discriminator, recorded every print_every batches during training.

fig, ax = plt.subplots()

losses = np.array(losses)

plt.plot(losses.T[0], label='Discriminator', alpha=0.5)

plt.plot(losses.T[1], label='Generator', alpha=0.5)

plt.title("Training Losses")

plt.legend()


Generator samples from training

View samples of images from the generator, and answer a question about the strengths and weaknesses of your trained models.

# helper function for viewing a list of passed in sample images

def view_samples(epoch, samples):
    fig, axes = plt.subplots(figsize=(16,4), nrows=2, ncols=8, sharey=True, sharex=True)
    for ax, img in zip(axes.flatten(), samples[epoch]):
        img = img.detach().cpu().numpy()
        img = np.transpose(img, (1, 2, 0))
        # map tanh output from [-1, 1] back to [0, 255] for display
        img = ((img + 1)*255 / (2)).astype(np.uint8)
        ax.xaxis.set_visible(False)
        ax.yaxis.set_visible(False)
        im = ax.imshow(img.reshape((32,32,3)))

# Load samples from generator, taken while training
with open('train_samples.pkl', 'rb') as f:
    samples = pkl.load(f)

_ = view_samples(-1, samples)

Recommended next steps – improvements

Increase the number of epochs. I only ran 2 because I was on CPU.

Create a deep model and use it to generate larger (say 128×128) images of faces.

Read existing literature to see if you can use padding and normalization techniques to generate higher-resolution images.

Implement a learning rate that evolves over time, as they did in https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix

See if you can extend this model and use a CycleGAN to learn to swap different kinds of faces. For example, learn a mapping between faces that have and do not have eye/lip makeup, as they did in https://gfx.cs.princeton.edu/pubs/Chang_2018_PAS/Chang-CVPR-2018.pdf
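As a starting point for the learning-rate suggestion above, here is a minimal sketch of a linear decay schedule, loosely modeled on the linear policy in the CycleGAN repository. The function name linear_decay and its arguments are hypothetical: the rate stays constant for n_epochs_constant epochs, then decays linearly toward zero over n_epochs_decay further epochs.

```python
def linear_decay(epoch, n_epochs_constant, n_epochs_decay):
    """Multiplier for the base learning rate at a given (0-indexed) epoch."""
    # number of epochs past the constant phase (0 while still in it)
    overshoot = max(0, epoch + 1 - n_epochs_constant)
    return 1.0 - overshoot / float(n_epochs_decay + 1)


for epoch in (0, 9, 10, 15, 19):
    print(epoch, round(linear_decay(epoch, 10, 10), 4))
```

A function like this could be passed as lr_lambda to torch.optim.lr_scheduler.LambdaLR for both d_optimizer and g_optimizer, calling the scheduler's step() once per epoch. With only 2 epochs of training the effect would be negligible, so this mainly pays off for longer runs.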
